The shortlist looked strong. Reach, demographics, brand safety all checked out, and the team greenlit the creator partnership. Three months later, the impressions were there, the brand lift was there, and not a single thing had changed in how that audience thought, felt, or acted. That's how most youth-audience campaigns actually end. No explosion or crisis, just a quiet miss. Certain KPIs came back fine, but the audience didn't do anything differently, and the post-mortem never revealed why.

In this article:

- Why Partner Selection Is the Highest-Leverage Decision in Youth Campaigns

- How Gen Z and Gen Alpha Actually Assign Trust (And Why It Breaks Most Selection Models)

- The Partner Evaluation Framework

- Selection in Practice: Where the Process Actually Breaks

- The Most Common Selection Mistakes (And How to Avoid Them)

- Building Toward a Repeatable Selection Capability

- Selection as a Compounding Advantage

Why Partner Selection Is the Highest-Leverage Decision in Youth Campaigns

The teams that consistently win with youth audiences don't have better creative. They have better partners. Everything downstream, including the brief, measurement plan, and renewal conversion, flows from this one decision. Most brands over-invest in execution but under-invest in answering one critical question: can the partner deliver what the campaign actually needs? That inversion is where campaigns fail before they start.

What most brands underestimate is how specifically youth gaming campaigns produce value. These campaigns don't work through exposure. They work through the trust a creator has built with their community over time. That trust is what converts a brand's presence in a creator's content into purchases, advocacy, and genuine shifts in brand perception. Research in esports communities confirms this directly: community engagement predicts whether fans act on a brand's presence. Passive viewership doesn't move it.

The partner selection decision is where the campaign's mechanism of value is either present or it isn't.

No amount of creative quality or distribution budget installs it afterward.

When that community trust isn't present, the reason is usually visible before the campaign starts. For example, a creator whose community has eroded (through toxicity, through declining engagement, through a series of partnerships that felt transactional to the audience) cannot deliver that mechanism regardless of their follower count. In a controlled experiment, participants exposed to a brand collaboration in a toxic gaming environment allocated roughly 11% less spending toward the brand than participants who saw the same collaboration without toxicity present.

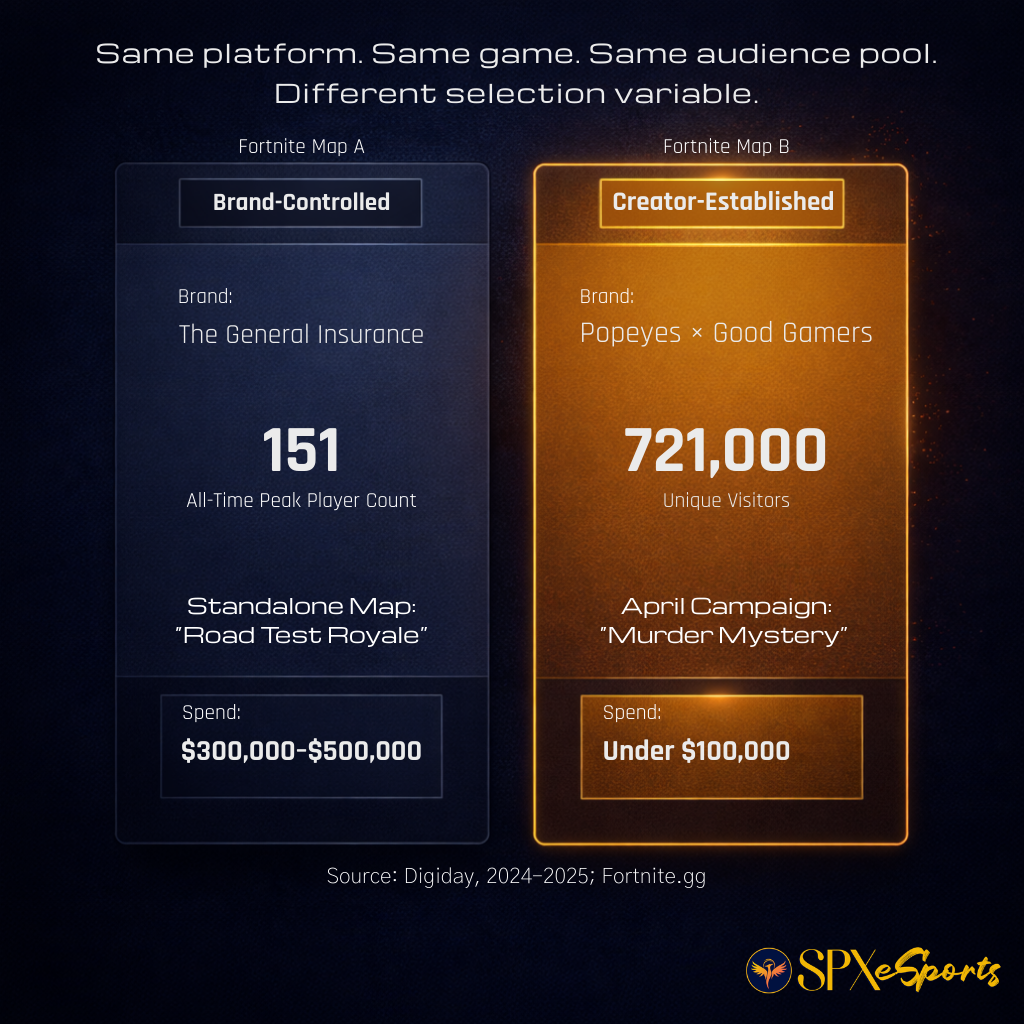

That's the cost of erosion. The complete absence of community trust carries an even steeper cost. In 2024–2025, brands spending $300,000–$500,000 on agency-built Fortnite maps bypassed established creator communities entirely. In one case, The General Insurance's "Road Test Royale," peak concurrent players numbered only in the hundreds while 1.7 million people were playing Fortnite at the same time. By contrast, Good Gamers' integration of a Popeyes sponsorship into the established "Murder Mystery" creator map reached 721,000 unique visitors for under $100,000. Same platform. Same game. Same audience pool. The gap wasn't creative or executional. It was a selection variable.

That underperformance then becomes an organizational problem, and this is where the damage compounds. Creator spend has grown from $13.9 billion in 2021 to $29.5 billion in 2024, with projections reaching $37 billion in 2025. Budget lines at that scale don't underperform quietly; every miss gets scrutinized. When the cause is a wrong partner selection, finance doesn't see a partner selection error. All they see is a Gen Z initiative that didn't return. Then that framing follows the next proposal into the room (and the one after that).

The stakes of this decision will only increase. Gaming's player share of the global online population is forecast to stagnate through 2028, even as channel investment continues to grow. More brand teams will be competing for the same limited pool of creators who have built genuine community trust. Brand teams that treat partner selection as a repeatable discipline, rather than a judgment call assembled under deadline pressure, will hold a durable advantage in that competition. The Partner Due Diligence Checklist for Youth Campaigns builds that capability: evaluation criteria that hold before a partner is ever shortlisted, not criteria assembled to justify a choice already made.

How Gen Z and Gen Alpha Actually Assign Trust (And Why It Breaks Most Selection Models)

Most brand selection criteria are built around three questions. How big is the audience? Does it match our demographic? Is the creator brand-safe? These are reasonable questions. They are also the wrong questions. Gen Z and Gen Alpha don't assign trust the way selection models assume. They don't extend it because a creator has a large following. They don't withhold it because a creator runs sponsored content. What they're watching for is something more specific and more observable, once you know what to look for.

What Gen Z actually responds to

Four patterns show up consistently in gaming and creator communities. The first and most important is Creative Autonomy. When a creator's voice stays intact across sponsored and organic content, the audience reads that as evidence the relationship is real. When the voice shifts, the audience registers it immediately. That shift can show up as a change in energy, stiffened phrasing, or the disappearance of the creator's natural cadence.

A creator who sounds like themselves in a brand integration and a creator who sounds like they're reading a brief are not the same partner, even if their follower counts are identical. This isn't an aesthetic preference. It's the mechanism by which trust either transfers to the brand or doesn't.

The other three patterns reinforce this one.

- Consistency: Turning down deals that don't fit and staying in character when a brand arrives both signal to the audience that the creator's identity isn't for sale.

- Community responsiveness: Showing up in comments, knowing the audience, and engaging when something goes wrong are all signals that the relationship is mutual, not transactional.

- Vulnerability: For example, losing a game on stream and responding with honest, genuine emotion builds the kind of attachment that polished, brand-safe creators rarely achieve. That attachment is what sponsors actually need access to. And it can't be discovered in any media kit.

These four patterns share a common logic. Each one is evidence that the creator hasn't changed who they are in exchange for commercial access. That's what the audience is testing for. When they see the evidence, trust holds. When they don't, it doesn't matter how large the following is.

Where Gen Alpha overlaps, and where the picture is still forming

Gen Alpha presents different conditions. The media pathways are distinct. These younger audiences are forming relationships with creators earlier, and through different platforms, than Gen Z did at the same age. The platforms they're on, and what they're doing there, don't always match what brand teams expect from prior experience with older cohorts.

The trust pattern that's emerging is specific, and it matters for partner selection. Gen Alpha is more skeptical of traditional media figures and celebrity endorsement than Gen Z: only 21% view athletes or celebrities as role models, while more than a third identify content creators in that role. They also associate the scale of a marketing effort with inauthenticity. A large, visible campaign can register as a reason not to trust the message rather than a reason to.

At the same time, Teneo's November 2025 study of 1,000 Gen Alpha children in the US and UK found that only 22% trust information from social media as an institution, while nearly half trust their favorite creators as much as family and friends. Low institutional trust in social media. High relational trust in specific creators. That combination means a brand that reaches Gen Alpha through the wrong creator isn't just ineffective. It's actively working against itself.

What we don't yet have is a direct, methodologically sound comparison of how Gen Alpha and Gen Z form trust differently across the full age range. The pattern described above is directionally consistent with what the research shows, but it hasn't been measured head-to-head against Gen Z with validated instruments. That comparison doesn't exist yet.

The smell test problem

Youth audiences detect a transactional partnership quickly. Not because they analyze deal structures. Because the creator's behavior changes. The energy shifts. The language becomes slightly unfamiliar. The creator starts advocating for something in a way that doesn't match how they normally talk.

A 14-year-old described it without being asked about sponsorship mechanics: "I'm upset because I thought it was just a regular video, but it was just to make money." A 10-year-old explained why creators promote products: "Because they get a contract that pays them a lot of money if they do it." These audiences aren't naive. They have a working model of how brand deals function.

What breaks trust isn't the existence of a sponsorship. It's the evidence that the creator stopped being themselves to fulfill it.

The failure mode is usually quiet. A youth audience that registers a forced partnership rarely generates public backlash. The creator retains the viewer relationship, but the brand never gains entry to it. They continue watching the creator and ignore the brand. That outcome doesn't appear in a campaign report as a problem. It appears as an impression number without the behavior change behind it.

Why standard selection criteria don't catch this

Follower count tells you the size of a potential audience. It tells you nothing about whether that audience trusts the creator enough to act on a brand recommendation. Engagement rate is better, but measures volume of response, not quality of relationship. A comment section full of reactions is not evidence of community trust. It's evidence of entertainment value.

Demographic overlap confirms that the right people are watching. It doesn't confirm they're watching in a way that makes them receptive to brand messaging. Brand safety screening filters out obvious risk. It also tends to filter toward creators whose edges have been sanded off. In gaming and creator communities, credibility often lives at those edges. A creator who has never said anything controversial may also have never said anything their audience truly believes.

None of these criteria are wrong to apply. They're necessary. They're just not sufficient.

A selection model that stops there is measuring reach and risk mitigation. It is not measuring whether the trust that produces audience behavior is actually present. This means the campaign's most important variable is going undecided until launch. Identifying the metrics that do capture that variable is what KPIs for Creator Partnerships Beyond Impressions addresses directly. The trust signals identified here are also the foundation the Message Fit Test for Gen Z and Gen Alpha Campaigns is built on, because a message fit test only works if you're testing against the signals that actually drive trust, not the proxies that are easiest to pull from a database.

The Partner Evaluation Framework

Partner selection breaks down in a specific way. It starts when different people in the room care about different things and call it the same conversation. One person is thinking about reach. Another is thinking about brand safety. A third is thinking about whether the creator's aesthetic matches the campaign direction. None of them are wrong. But without a shared set of dimensions, the conversation produces a decision rather than an evaluation, and those are different things.

The six dimensions below give a cross-functional team a common vocabulary for assessing the same candidate against the same criteria. They're designed to make the evaluation explicit enough that disagreements become productive. When two people reach different conclusions about a candidate, the framework tells you exactly which dimension they're disagreeing about and why.

Each dimension is a question the evaluation needs to answer before the candidate moves forward. Some dimensions will carry more weight on a given campaign than others. None of them can be skipped entirely.

Audience Alignment

The most common way a shortlist goes wrong is that it was built from a database sorted by follower count. Someone filters by category, age demographic, and rough engagement rate. The top ten names get reviewed. Three look right. One gets picked. The selection criterion was reach. The campaign's actual success depends on something else entirely.

Audience alignment is about whether the creator's community and your target audience share cultural and behavioral overlap. Viewer demographics won't reveal this to you. A 22-year-old male Twitch viewer and a 22-year-old male TikTok viewer are the same demographic. They are not the same audience. Platform and format shape who shows up and why.

Live streaming attention is distributed significantly across platforms, and the viewer behaviors differ by environment. For example, non-gaming formats now represent 22% of Twitch viewership. That's a different audience relationship than a gameplay channel, and it requires a different kind of integration. Among UK teens, TikTok Live and YouTube dominate different types of engagement. Build your candidate list from where your cohort actually is, not from where your team is most comfortable operating.

Trust Integrity

Assume you've found a creator whose audience matches. The next question is one most selection processes don't ask clearly enough: does that audience trust this creator, or do they just watch them? These are different things. They produce different outcomes.

Brands already name creator reputation and audience alignment as top selection criteria: 58% and 56% respectively. The problem isn't that teams skip this. It's how they assess it. Creator reputation gets evaluated through PR history. Audience trust gets inferred from follower count. Neither of those is the right signal.

The right signal is how the audience behaves when sponsored content appears. Do comment patterns change? Does engagement drop or go quiet? Does the audience treat the integration like an ad to ignore, or like something the creator actually endorses? A creator whose audience skips sponsored posts has reach. They don't have trust.
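One way to make that comparison concrete is to put a creator's sponsored and organic posts side by side. The sketch below is a rough illustration, not a validated trust metric: the post records, the `comment_rate` helper, and the choice of comments per 1,000 views are all assumptions made for the example. A low ratio doesn't prove trust is missing. It tells you which comment sections to go read.

```python
from statistics import median

# Hypothetical post records for one creator: (is_sponsored, views, comments).
# All numbers are invented for illustration.
posts = [
    (False, 120_000, 960),
    (False, 95_000, 810),
    (False, 140_000, 1_050),
    (True, 110_000, 420),
    (True, 130_000, 455),
]


def comment_rate(views: int, comments: int) -> float:
    """Comments per 1,000 views; 0.0 if the post has no views."""
    return 1000 * comments / views if views else 0.0


def sponsored_to_organic_ratio(records) -> float:
    """Median sponsored comment rate divided by median organic comment rate."""
    organic = [comment_rate(v, c) for s, v, c in records if not s]
    sponsored = [comment_rate(v, c) for s, v, c in records if s]
    if not organic or not sponsored:
        raise ValueError("need at least one organic and one sponsored post")
    baseline = median(organic)
    if baseline == 0:
        raise ValueError("organic comment rate is zero; ratio is undefined")
    return median(sponsored) / baseline


ratio = sponsored_to_organic_ratio(posts)
print(f"sponsored/organic comment-rate ratio: {ratio:.2f}")
```

With these made-up numbers, sponsored posts draw less than half the comment rate of organic ones. Volume alone still says nothing about what the audience is actually saying, so treat the ratio as a prompt for the qualitative review, not a substitute for it.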

Youth audiences come into every brand interaction with a working model of how deals function. They're not naive about it. The question isn't whether they know there's a commercial arrangement. It's whether the creator has enough genuine credibility that the arrangement doesn't break their relationship with the audience. Smaller, more community-embedded creators tend to demonstrate this kind of trust more consistently than larger ones: the relationship is closer, and the community's expectations are clearer. That's not a universal rule, but:

Scale and trust are not the same variable. Treat them separately.

Creative Compatibility

There's a failure mode that arrives later in the process, after a creator has been selected and the brief has been written. The integration doesn't land. The creative feels off. The creator delivers what was asked and the result feels like an ad. The team is confused because the creative was strong and the creator is talented. What happened is that the brief required the creator to operate outside their natural format. The campaign never had a chance, because creative compatibility was never evaluated.

The practical test is simple: can your brand show up in this creator's content in a way that feels continuous with what they normally do? If the answer requires the creator to change their tone, their format, or the way they relate to their audience, the integration won't hold. In the gaming creator space, the operating principle is direct: if you don't let creators be themselves, the message falls flat.

Format matters as much as voice. A creator whose audience is there for them, not for the game, is a different content environment than one doing structured gameplay. Just Chatting formats accumulated 961 million hours of viewing across platforms in Q4 2025 alone. A brand whose message lives in product demonstration doesn't belong there, not because the audience is wrong, but because the format won't carry it. Recognizing that before the brief exists saves everyone significant rework.

Youth audiences signal creative incompatibility quietly. When the integration doesn't feel right, they don't object or push back. They simply disengage, and the campaign absorbs the cost as impressions without behavior change.

Operational Reliability

Operational reliability is the dimension teams skip because it feels like an execution detail rather than a selection criterion. It isn't. It's also the hardest dimension to assess from the outside. That difficulty is structural.

The influencer marketing industry has no verified public data on creator deadline compliance, brief adherence, or deliverable completion rates. Every major annual survey measures market size, ROI, and fraud. None measures whether creators deliver what they contracted to deliver. That gap exists because brands don't publicize disputes, platforms don't publish numbers that would undermine confidence in their channel, and failures settle quietly. The data infrastructure to answer this question is currently being built. The IAB launched a cross-industry task force in 2025 with standards guidance expected by September 2026, but it doesn't exist yet.

What is assessable is compliance posture. FTC disclosure requirements are the one area where creator operational compliance has actually been measured, and the findings are instructive:

Somewhere between 80% and 89% of influencers don't properly disclose brand deals, and it's not ignorance. Creators know the requirement and deprioritize it because algorithmic penalties feel more immediate than enforcement consequences.

That pattern of knowing an obligation and choosing non-compliance when enforcement is difficult is worth weighing when evaluating a partner's operational maturity more broadly. For any campaign with under-13 reach, compliance posture is now a selection-stage conversation, not a legal review afterthought. The FTC's updated COPPA rule requires that data retention policies, security programs, and third-party sharing arrangements be documented and defensible, with most provisions taking effect April 22, 2026. A creator partner who hasn't considered what their data practices look like for under-13 audience members isn't operationally mature enough for that campaign context.

Measurement readiness belongs here too. A creator who cannot support the baseline metrics your campaign requires, including returning visitor data, live participation signals, and format-specific engagement, creates a measurement problem before the campaign launches. Aligning on what's actually measurable is a partner selection conversation. Teams that treat it as a post-launch discovery tend to end up in post-mortems explaining why the attribution is inconclusive.

Risk Profile

Risk profile is the dimension that feels like it's about the past but is actually about the future. Content history matters. So does what the partnership is walking into.

Platform concentration is an underweighted risk. A creator whose entire audience lives on one platform is exposed to that platform's policy decisions, enforcement shifts, and trajectory. That exposure becomes your exposure. Twitch hours watched declined nearly 15% year-over-year in 2025. Kick grew over 100% year-over-year but fell 20% in a single quarter. A creator concentrated on a volatile platform carries that volatility into your campaign timeline and your measurement assumptions.
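Platform concentration can be put on a number before it becomes an argument. The sketch below borrows the Herfindahl-Hirschman index, a standard concentration measure from economics, and applies it to a creator's watch hours by platform. That choice is an illustration, not something the research above prescribes, and the `watch_hours` figures are hypothetical.

```python
# Hypothetical monthly watch hours for one creator, by platform.
watch_hours = {"Twitch": 82_000, "YouTube": 14_000, "Kick": 4_000}


def concentration(hours_by_platform: dict[str, float]) -> tuple[str, float, float]:
    """Return (top_platform, top_share, hhi) for a creator's audience.

    hhi is the sum of squared platform shares: 1.0 means the whole
    audience sits on one platform, and values near 1/n mean it is
    spread evenly across n platforms.
    """
    total = sum(hours_by_platform.values())
    if total <= 0:
        raise ValueError("total watch hours must be positive")
    shares = {p: h / total for p, h in hours_by_platform.items()}
    top = max(shares, key=shares.get)
    hhi = sum(s * s for s in shares.values())
    return top, shares[top], hhi


top_platform, top_share, hhi = concentration(watch_hours)
print(f"{top_platform} holds {top_share:.0%} of watch hours (HHI {hhi:.2f})")
```

Any threshold for "too concentrated" is a judgment call for the team. The point of the number is that two candidates can be compared on the same basis, and a high score prompts a closer look at that platform's trajectory.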

Community health is the risk dimension most evaluation processes skip entirely. Toxicity in gaming communities is not rare. More than a third of players in environments where it's prevalent report being directly targeted. Players who are directly targeted are the most likely to permanently withdraw from those spaces. A creator whose community has a toxicity problem is operating in an environment where the audience is being actively eroded. Brand association in these situations creates adjacency risk that has nothing to do with the creator's personal content choices.

Brand collaborations in gaming environments compromised by toxicity see their financial upside trimmed even when the brand itself is not implicated. Evaluating the environment, not just the creator, is how you catch this before it costs you.

Values Alignment

Values alignment is the dimension most often reduced to a press release exercise. It gets treated as "do we share similar stated commitments." That's not what it means here. Values alignment means: does this creator have a genuine relationship with your brand's category, and does their relationship with their audience look like the kind of partnership your brand wants to be adjacent to?

The practical test on the first question is product affinity. The operating rule from inside the gaming creator ecosystem is unambiguous: "If the creator doesn't generally like the brand or product, we don't do it." A forced endorsement is a predictable credibility break. The audience reads it. The creator's performance reflects it. The campaign absorbs the cost.

The second question is subtler. A creator who treats their audience as participants in something they're building together is a different partner than one who treats their audience as a viewership metric. That distinction matters most when your campaign's success depends on audience behavior rather than just exposure. Incentive structures are the place where this alignment either gets reinforced or quietly undermined, and Fair Incentives for Creators in Brand Partnerships addresses exactly that: how to structure compensation so the creator's incentives and their authentic relationship with their audience stay pointed in the same direction.

Putting the Framework to Work

These dimensions don't carry equal weight across every campaign. A campaign targeting tight community activation should weight trust integrity and values alignment most heavily. A campaign with significant under-13 reach should treat risk profile and operational reliability as near-disqualifying if they don't hold up. What matters is that the same dimensions are evaluated for every candidate on the shortlist, by everyone involved in the decision.
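A minimal sketch of that weighting logic, assuming a 1-to-5 rating per dimension: the scale, the weights, and the `gate_floor` cutoff are hypothetical choices for illustration, not part of the framework itself. The two things it does reflect from above are that every dimension must be scored for every candidate, and that some dimensions can be treated as near-disqualifying for a given campaign.

```python
DIMENSIONS = (
    "audience_alignment",
    "trust_integrity",
    "creative_compatibility",
    "operational_reliability",
    "risk_profile",
    "values_alignment",
)


def evaluate(scores, weights, gates=(), gate_floor=3):
    """Return (weighted_average, failed_gates) for one candidate.

    scores:  rating from 1 to 5 for each dimension (assumed scale)
    weights: relative weight for each dimension on this campaign
    gates:   dimensions that flag the candidate if rated below gate_floor
    """
    missing = [d for d in DIMENSIONS if d not in scores or d not in weights]
    if missing:
        raise ValueError(f"every dimension needs a score and a weight: {missing}")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight
    failed = [d for d in gates if scores[d] < gate_floor]
    return weighted, failed


# Hypothetical campaign with significant under-13 reach: risk profile and
# operational reliability carry triple weight and act as gates.
weights = {d: 1 for d in DIMENSIONS}
weights["risk_profile"] = 3
weights["operational_reliability"] = 3

candidate = {
    "audience_alignment": 4,
    "trust_integrity": 5,
    "creative_compatibility": 4,
    "operational_reliability": 2,
    "risk_profile": 4,
    "values_alignment": 4,
}

average, failed = evaluate(
    candidate, weights, gates=("risk_profile", "operational_reliability")
)
print(f"weighted average: {average:.2f}, failed gates: {failed}")
```

Keeping the gates separate from the average is deliberate. A strong trust score shouldn't be able to paper over a dimension the campaign can't afford to get wrong, and the list of failed gates tells reviewers exactly where the disagreement should be.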

The Partner Due Diligence Checklist for Youth Campaigns translates these dimensions into a step-by-step verification process. The Partnership Brief Template for Gen Z and Gen Alpha gives your team a format for documenting the evaluation and presenting it to stakeholders who will want to know how the decision was made, not just what the decision was. The contractual implementation of creative compatibility and trust integrity is where Creative Control Clauses for Creator Partnerships and well-structured creator partnership terms become relevant. Knowing what you need from a creative autonomy standpoint during evaluation means you can build for it in the deal structure, rather than negotiating it after expectations have already been set.

Selection in Practice: Where the Process Actually Breaks

Most teams run a version of the same selection process. They pull a candidate list, review metrics, check brand safety, get alignment, and make a decision. The process is familiar. The failure points are specific to gaming and creator communities, and they're not where most teams expect them.

The database problem

The first break usually happens before anyone has evaluated a single candidate. The list was built by sorting a database by follower count and filtering by category. That produces a list optimized for visibility, not for the kind of audience relationship that drives behavior in gaming communities.

A third of brands identify finding the right creators as their biggest operational challenge. The ecosystem is fragmented and credibility is hard to assess at scale. The teams that solve this don't find a better database. They change the filter. Audience alignment and values alignment applied at the list-building stage eliminate candidates who would have consumed evaluation time and failed later. The shortlist gets smaller. The evaluation gets more useful.

For Gen Alpha campaigns specifically, this matters more. Platform reality for under-13 audiences is different from what most teams' prior experience would suggest. More than half of children aged 5–8 already watch gaming content. Teen engagement across TikTok Live and YouTube splits by content type in ways that aren't visible from demographic data alone. A candidate list built from the team's existing relationships rather than from where the cohort actually is will miss this, and the misalignment won't surface until performance data does.

The content evaluation gap

Here's a failure mode that shows up consistently: the team evaluates the creator's media kit and recent highlight content instead of watching how they actually operate over time. These are not the same thing. What the media kit won't show is how the creator handles a partnership that doesn't fit their voice, or how their community responds when the content shifts from organic to sponsored.

In gaming communities, this is legible. The comment section changes. Community members who would normally defend the creator go quiet, or speak up against the integration. The Discord tone shifts. These signals are visible, but only to someone who has been watching the creator's ecosystem regularly, not to someone reviewing a deck.

Partnership structures in gaming creator relationships are moving toward months-long, open-ended arrangements rather than fixed deliverable lists. That shift changes what the evaluation needs to cover.

You're not assessing whether a creator can deliver three posts. You're assessing whether they can sustain authentic integration over a campaign arc.

The content history is the only evidence available for that question. The moment that tells you the most is one teams almost never look for: how does the creator respond when they've made a mistake? A creator who misreads their community or handles a controversy badly, and recovers with honesty rather than performance, has demonstrated the kind of relationship with their audience that holds under pressure. That's the relationship your brand is entering.

The protectiveness signal

The first conversation with a creator is where most teams make a diagnostic error. They treat it as a pitch. The creator performs enthusiasm. Both sides leave satisfied. Nothing useful was learned.

A creator who has built genuine trust with their audience is protective of it. They know which integrations have worked and why. They know what their audience will tolerate and what will register as a betrayal. And they'll push back on briefs that ask them to operate outside that understanding, because they've seen what happens when they don't. A creator who agrees to anything without pushback is telling you something. So is one who can't describe their community with any specificity.

The question worth asking directly: what's a deal you've turned down, and why? The answer reveals whether the creator has the kind of relationship with their audience that makes trust transfer possible.

Youth audiences are more attuned to disclosure than most briefs account for. Many want it clear when content is sponsored. They expect it to be stated directly in the content, not buried in a description field. A creator who hasn't developed a consistent disclosure approach hasn't fully thought through their audience relationship. That's a trust integrity signal. It belongs in the evaluation, not in the contract negotiation.

Where cross-functional alignment breaks for youth campaigns specifically

The approval process fails in a particular way for gaming and creator partnerships: the stakeholders reviewing the recommendation don't share a vocabulary for evaluating trust. Marketing has assessed creative compatibility. Finance wants to know what's measurable and what the return model looks like. Legal wants to know the risk exposure. These are legitimate questions. The problem is that the trust-based argument doesn't translate naturally into the language any of those functions use to make decisions. It can be challenging to adequately convey "this creator has genuine community credibility, which is why this partnership will work" to the finance and legal teams.

This isn't a niche problem. Only 27% of marketing leaders report having mature cross-functional operating models, with siloed structure cited as the primary obstacle.

96.6% of brands say they want documentation on how a creator was vetted before a campaign launches. Only 25.6% report actually receiving it.

The recommendation arrives without the paperwork that would let other functions evaluate it on their own terms. That's where it stalls.

Finance's version of this problem is concrete. Some gaming formats support robust measurement: returning visitor data, live participation signals, community activity. Others don't. If the campaign's measurement plan depends on data the creator's platform and format can't produce, the attribution case collapses before launch. That's a selection-stage issue that surfaces as a finance-stage problem and damages the credibility of the recommendation.

For campaigns with under-13 reach, the legal version is equally concrete. The COPPA compliance posture that belongs in partner evaluation (not legal review) becomes a cross-functional flashpoint if it hasn't been assessed before the recommendation arrives. A structured approach to legal review for creator deals addresses how to structure that involvement so it happens in parallel with evaluation rather than after it.

The recommendation that survives cross-functional review is the one that was packaged for each function before it arrived. Not because the stakeholders need managing, but because the trust argument that drove the selection needs to be translated into terms each function can evaluate against their own criteria. A completed Partner Due Diligence Checklist for Youth Campaigns does part of this work. It shows that the recommendation is the output of a structured process, not a preference. Budgeting a Gen Z campaign with a stronger cost and measurement case addresses the finance-facing translation specifically: how to build the cost and measurement case for a creator selection that finance can pressure-test without having to take the trust argument on faith.

The Most Common Selection Mistakes (And How to Avoid Them)

The framework helps. It doesn't make teams immune to the patterns that have been undermining youth gaming campaigns long before anyone formalized a selection process. These mistakes persist not because teams are careless but because each one has a logic that makes sense right up until it doesn't.

1. Selecting for reach when the campaign needs depth

This is the most common mistake, and it's easy to understand why it happens. Reach is the number that fits in a slide. It's what gets approved in a budget conversation. It's the metric everyone in the room already knows how to evaluate.

The problem is that reach and influence over behavior are different things. A creator with millions of followers and low community participation cannot unlock the audience actions that make gaming creator campaigns worth the premium over paid media. Passive stickiness doesn't move those outcomes. Community engagement does. A smaller creator whose audience is genuinely invested in what they do will outperform a larger creator whose audience treats them as background content.

An analysis of influencer marketing covering more than 1.8 million purchases points in the same direction: engagement quality, not scale, is what predicts ROI. The corrective isn't to ignore reach. It's to evaluate reach last, after the dimensions that actually predict behavior have been assessed.

2. Confusing the creator's brand with their audience's values

A creator can be authentic, credible, and genuinely trusted, but be trusted by an audience that doesn't share your brand's values. These are different things. They get conflated regularly.

The creator's personal brand is what they stand for. The audience's values are what they came for. Those overlap more often than they diverge, but they're not identical, and the gap between them is where brand association can go wrong even when the creator is a genuine fit.

The check is straightforward: look at how the community talks about the creator, not just how they respond to the creator. What do they say to each other? What do they bring up when the creator isn't prompting them? What do they defend? That conversation tells you more about audience values than the creator's content does. If there's a meaningful gap between what the creator represents and what their community cares about, the brand association lands in the gap.

3. Over-indexing on brand safety until the partner has no cultural credibility

Brand safety screening is necessary. Taken too far, it produces a shortlist of creators so sanitized they've lost the thing that made them credible with youth audiences in the first place. In gaming and creator communities, credibility lives at the edges. A creator who has never said anything controversial may also have never said anything their audience truly believes. The audience extends trust to creators who have a point of view, who have staked something on their opinions and been consistent about it over time. A creator who has been optimized for brand safety has often traded that away.

The distinction worth making is between content risk and community risk. Content risk is what traditional brand safety screening evaluates. Community risk is different. It's the health and stability of the audience environment, and it's what most screening misses entirely. A creator with a perfectly clean content history can still operate in a community where toxicity is endemic, harassment is common, and the audience segments most valuable to your brand are quietly withdrawing. Over-indexing on the first while ignoring the second is where brand safety becomes its own form of selection error.

4. Treating partner selection as a procurement transaction

Procurement logic is efficient. It sets requirements, scores candidates against them, selects the highest scorer, and moves to contracting. For buying software or agency services, this works. For selecting a creator partner in a youth gaming campaign, it produces a relationship that the creator's audience will recognize as transactional and treat accordingly.

Gaming creator relationships that generate genuine brand value are built differently. They're structured as longer-term, open-ended arrangements, with room for the creator to develop the integration rather than deliver against a fixed list of requirements. That structure isn't possible if the selection process established a vendor relationship from the first conversation. The creator arrives knowing they're a supplier. The brand arrives expecting delivery. The audience notices.

The practical shift: enter the first conversation with the frame of "are we compatible" rather than "can you deliver what we need." What does the creator volunteer? What do they push back on? What are they excited about? What comes out of that conversation tells you more about whether the partnership will work than any capability assessment does.

5. Letting one voice override the framework

This happens in two ways. A senior stakeholder has a personal preference for a creator they've seen in other contexts. Or a team member with strong opinions argues persuasively for a candidate the framework would have filtered out. In both cases, the framework gets set aside rather than defended.

The pattern is well-documented in adjacent domains. Sponsorship research has named it variously as the "president's whim" and the "management ego trip." Findings indicate that sponsorships selected on the basis of executives' personal interests consistently underperform those selected against strategic organizational objectives.

When selection criteria remain subjective, the most senior person's subjectivity wins.

Influencer marketing practitioners have described the same dynamic without the academic framing. Mae Karwowski, CEO of Obviously, told Digiday that marketers have historically operated on a "vibes oriented" basis, choosing creators because "we just like this person" or "my kids follow this person." The organizational behavior research explains why teams don't push back: when a senior stakeholder champions a candidate, the psychological cost of dissent rises and the structural opportunity for data-driven challenge shrinks. People are less critical of high-status decision-makers and less likely to surface the signals that would complicate their preference.

The framework's value is exactly that it exists independently of any individual's opinion. When a recommendation conflicts with what the evaluation produced, the right conversation is about which dimension the candidate actually meets and which they don't, not about whether the evaluator's instinct is trustworthy. Documentation makes this possible. A completed evaluation that shows how each candidate scored against each dimension gives the framework a presence in the room that can absorb pushback without requiring anyone to argue against a senior colleague directly.

6. Moving too fast and skipping the steps that would have caught it

Launch pressure compresses timelines. The first casualty is usually the evaluation steps that feel qualitative, such as content review, community observation, and the first conversation with the creator. These get cut because they're hard to schedule and hard to justify when the deliverable isn't a document. The industry data on how compressed this gets is striking.

Over 50% of marketers spend 30 minutes or less vetting a single creator. 38.5% cite vetting being "too time-consuming" as their single biggest challenge, ranking it above measurement, discovery, and fraud. Only 9.1% describe their vetting process as scalable.

These aren't edge cases. They describe standard operating practice at most organizations. The cognitive science explains why the shortcuts are systematic rather than incidental: under time pressure, decision-makers shift from evaluating all relevant dimensions to filtering on a single salient metric. In creator selection, that metric is almost always follower count. Everything else gets deprioritized because it takes longer to assess and is harder to defend in a room that's already behind schedule.

What gets missed when these steps are skipped is almost always something that would have been visible. A creator whose community health has been deteriorating for months. A platform concentration risk that a quick metric review would have flagged. A values misalignment that would have surfaced in a thirty-minute conversation.

A framework for early warning signals that a Gen Z campaign is underperforming exists partly because of this pattern. Selection errors that weren't caught before launch tend to show up first as anomalies in week-one data, so teams need a framework for recognizing what they're seeing. Mid-campaign partnership recovery addresses what's available if that recognition comes too late. Both are downstream of a selection process that moved faster than the evaluation warranted.

If a selection mistake has already been made and the campaign is live, neither of those resources will undo the selection. What they can do is limit the cost. And if the campaign ends with questions about why it underperformed, the Post-Mortem Template for Failed Gen Z Campaigns provides a structure for diagnosing whether partner selection was the root cause. It also supports documentation candid enough that the next selection process doesn't make the same call.

Building Toward a Repeatable Selection Capability

There's a difference between a team that has picked good partners and a team that knows how to pick good partners. The first gets credit for a successful campaign. The second has something more durable: a process that generates better decisions over time, regardless of who's in the room or how much pressure the timeline is under.

Most teams are closer to the first than the second. That's not a criticism. It reflects how creator partnerships have historically been resourced. Selection was treated as a one-off judgment call, not a capability to be built. That's changing. Nearly half of ad spenders now consider creators a must-buy channel, not a tactical experiment. The industry is calling for standardized vetting tools, consistent reporting, and audience authentication infrastructure. These are all building blocks of a repeatable selection system. Teams that build that system internally, rather than waiting for the infrastructure to arrive, will have a compounding advantage.

What makes selection a capability rather than a decision

The distinction is documentation. A team that documents its selection rationale, stating why this creator, against which dimensions, with what reservations, has raw material for improving the next decision. A team that doesn't has only the outcome.

Outcomes are unreliable teachers. A partnership can succeed despite a flawed selection process and fail despite a rigorous one.

Without the documented rationale, there's no way to know which happened. With it, each partnership, whether successful or not, can generate valuable evaluation intelligence. The creator whose trust integrity signals were strong and delivered. The one whose platform concentration risk materialized exactly as flagged. The one who looked right on every dimension and still didn't work, which means the framework needs a question it isn't currently asking. This is how teams get better at selection. Not by running more campaigns, but by learning systematically from the ones they've run.
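One way to picture what a documented rationale makes possible is a small record that pairs the pre-launch evaluation with the eventual outcome. The sketch below is illustrative only: the `SelectionRecord` fields, the 1-to-5 scoring scale, the pass mark of 3, and the dimension names are all assumptions made for the example, not part of any template referenced in this article.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class SelectionRecord:
    """Hypothetical record of why a creator was selected."""
    creator: str
    scores: Dict[str, int]  # dimension -> score on an assumed 1-5 scale
    reservations: List[str] = field(default_factory=list)
    outcome: Optional[str] = None  # set after the campaign: "delivered" or "missed"


def classify(record: SelectionRecord, pass_mark: int = 3) -> str:
    """Compare what the evaluation predicted with what actually happened."""
    if record.outcome is None:
        return "pending"
    predicted_ok = all(score >= pass_mark for score in record.scores.values())
    delivered = record.outcome == "delivered"
    if predicted_ok and delivered:
        return "framework confirmed"
    if not predicted_ok and not delivered:
        return "flagged risk materialized"
    if predicted_ok and not delivered:
        return "framework gap: add a question"
    return "succeeded despite flags: review thresholds"


record = SelectionRecord(
    creator="creator_a",  # placeholder name
    scores={"trust_integrity": 4, "platform_concentration": 2},
    reservations=["audience concentrated on a single platform"],
    outcome="missed",
)
print(classify(record))
```

The four labels map onto the cases described above: the signal that held, the risk that materialized as flagged, the partnership that looked right and still didn't work, and the success that happened despite the process. Without the stored scores and reservations, only the outcome column would exist.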

Platform assumptions need regular revision

One of the ways selection capability decays is through assumption staleness. A team builds a strong process, runs it well, and then applies the same platform and audience assumptions twelve months later without checking whether they still hold. In gaming creator ecosystems, they often don't.

Platform attention redistributes quickly. Live streaming viewership shifts significantly year over year and within that, quarter over quarter. A creator whose audience was concentrated on a growing platform when you last evaluated them may be concentrated on a declining one now. The community health of a space you assessed eighteen months ago may look different today.

A repeatable capability includes a lightweight mechanism for revisiting these assumptions. This does not have to be a full re-evaluation every cycle. A quarterly check on platform trajectory, community health signals, and any enforcement or policy changes that affect the environments your partner roster operates in is usually enough. The Renewal Scorecard for Sponsorship and Creator Deals is built around exactly this kind of structured reassessment. It helps you treat renewal not as a default continuation but as a selection decision in its own right.

When to build a standing roster

At some point, evaluating from scratch for every campaign becomes its own inefficiency. The evaluation investment is duplicated. Relationships are started and dropped. Creators who have demonstrated genuine fit don't get the sustained engagement that long-term partnerships require.

The shift toward a standing roster makes sense when two conditions are met: you've run enough campaigns to know what good fit actually looks like for your brand, and you have enough documented evaluation history to make roster decisions with confidence rather than instinct. Long-term creator relationships produce better results than transactional ones. Ongoing partnerships allow for feedback, iteration, and content development that one-off campaigns can't support. The creator gets better at integrating the brand. The brand gets better at briefing the creator. The audience gets continuity rather than a rotating series of partnerships that each feel like an introduction.

A standing roster is also a hedge against the competitive pressure that's coming. Gaming's player share of the global online population is forecast to stagnate even as investment grows. Competition for the creators who have built genuine trust with youth audiences will intensify. Teams that have already established those relationships, and documented the evaluation that justified them, will be harder to displace than teams that are starting that process under pressure.

A repeatable creator partner program architecture picks up from here with the systems for moving from campaign-by-campaign selection to a managed portfolio of creator relationships. For teams whose selection capability is still developing, scaling a pilot sponsorship into a year-round program addresses the intermediate step: how to turn a single successful partnership into the proof of concept that justifies building the broader program.

Managing a portfolio of relationships also introduces a challenge that single-campaign selection doesn't: keeping brand voice coherent across multiple creators operating in different formats and communities. Brand voice consistency across creator content addresses that specifically. And maintaining organizational visibility into how those relationships are performing over time, in a format that keeps leadership informed without requiring a full review meeting, is what the Weekly Partnership Update Template Executives Read is built for.

Selection as a Compounding Advantage

Partner selection compounds in ways that aren't visible in a single campaign report. A team that selects well once has a successful campaign. A team that selects well repeatedly builds something harder to see and harder to replicate: a reputation inside creator communities that changes which creators say yes, what they're willing to do, and how much latitude they give the brand in collaborative work.

Creators talk to each other. The brands that show up with clear evaluation criteria, honest conversations about fit, and a structured approach to the relationship don't just get better performance from the partners they choose. They get access to partners they couldn't have attracted two years earlier. The selection process itself becomes a competitive asset. Not because it's proprietary or complex, but because most brands don't have one at all.

The inverse is also true, and it's quieter. Teams that treat selection as a rushed decision (by grabbing the biggest, cleanest name and moving to briefing) accumulate a different kind of reputation.

Creators learn which brands are transactional. They still say yes, because the money is real. But they hold back the thing that actually makes these campaigns work.

That thing is genuine creative investment in making the brand integration feel like something their audience would have wanted to see anyway. That discretionary effort is the gap between a campaign that hits its impression targets and one that changes how the audience relates to the brand. It can't be contracted for. It can't be briefed into existence. It's a function of whether the creator believes the brand chose them for the right reasons, and whether the selection process gave both sides enough information to know that the partnership was worth the investment before either committed.

The framework in this article won't make that judgment for you. It will make sure you're asking the questions that let you make it well.
